Roblox just flipped the game development model upside down. Not “AI-assisted” in the polite, copilot sense — we’re talking an AI that plans your game, builds 3D assets on command, runs QA overnight, and hands you a pull request in the morning. This is what agentic game dev looks like in the wild.

The Story

On April 16, 2026, Roblox made an announcement that is bigger than it first sounds: Roblox Studio is going agentic. The platform that built its empire on user-generated content (88 million daily active users, millions of creator-built games) just injected a full agentic AI loop into its development environment.

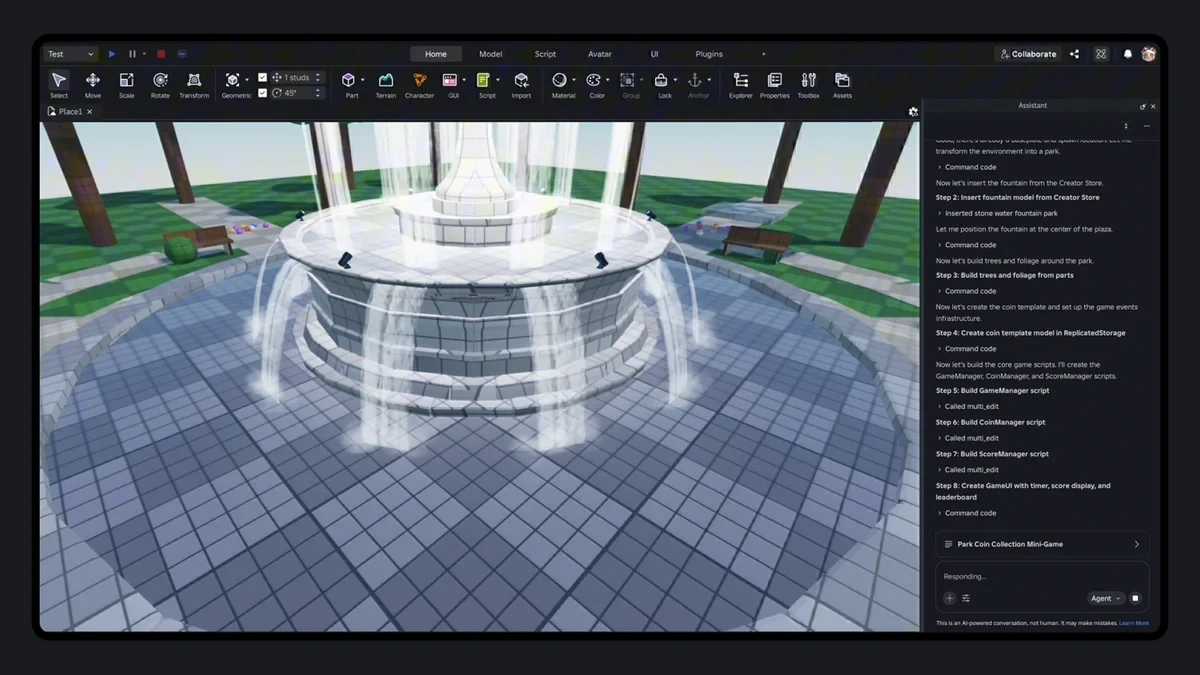

The new system isn’t a chatbot that helps you write Lua code. It’s a multi-phase autonomous agent that can take a vague creative brief and execute it across the entire development pipeline: planning → building → testing → self-correcting.

Planning Mode

The upgraded Roblox Assistant now acts as a collaborative architect. It digs into your game’s existing codebase and data model, asks clarifying questions about your intent, and converts that conversation into an editable, structured action plan — a task manifest that can be executed in parallel. You review it, tweak it, then hit go. No more flying blind on what the AI is about to do.
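
To make "a task manifest that can be executed in parallel" concrete, here is a minimal sketch. Roblox has not published the manifest format as far as this article knows, so the task names and the dependency-map shape are assumptions for illustration only. The idea: each task lists what it depends on, and everything whose dependencies are done can run at the same time.

```python
from graphlib import TopologicalSorter

# Hypothetical task manifest: task name -> set of tasks it depends on.
# These names are illustrative, not actual Roblox Assistant output.
manifest = {
    "generate_castle_mesh": set(),
    "write_door_script": set(),
    "place_castle_in_world": {"generate_castle_mesh"},
    "attach_door_script": {"write_door_script", "place_castle_in_world"},
    "run_playtest": {"attach_door_script"},
}

def parallel_batches(tasks):
    """Group tasks into batches; every task in a batch can run concurrently."""
    sorter = TopologicalSorter(tasks)
    sorter.prepare()  # raises CycleError if the plan has circular dependencies
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # tasks whose dependencies are all done
        batches.append(ready)
        sorter.done(*ready)
    return batches

batches = parallel_batches(manifest)
for number, batch in enumerate(batches, start=1):
    print(f"batch {number}: {batch}")
```

In this toy plan the mesh and the script are independent, so they land in the first batch together; everything after that waits on what came before. Editing the plan before you hit go amounts to editing that dependency map.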

Mesh Generation

Type a prompt, get a fully textured 3D mesh dropped directly into your game world. Built on Roblox’s own Cube foundation model, Mesh Generation lets creators replace early-stage placeholder blocks with detailed assets without ever leaving Studio. During early access, over 160,000 objects were generated, and games using the feature saw a 64% increase in average play time. That’s not a marginal improvement. The reported figure is a correlation, but the plausible reading is that players stay longer when the world looks like it was meant to be there.

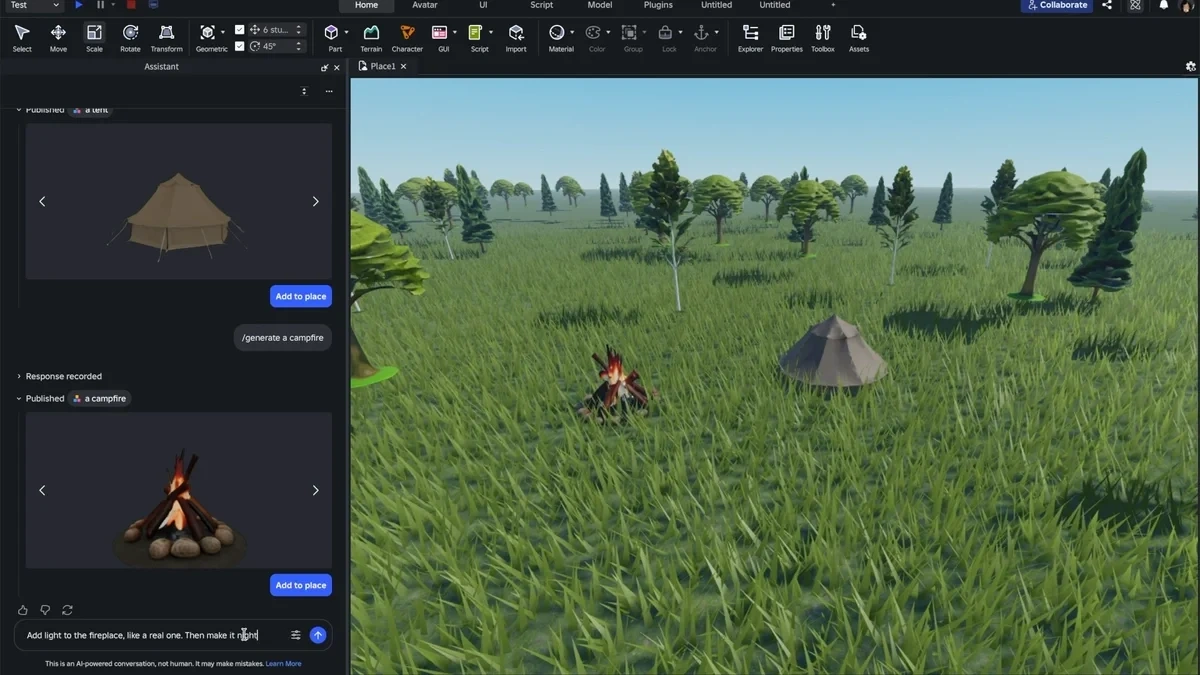

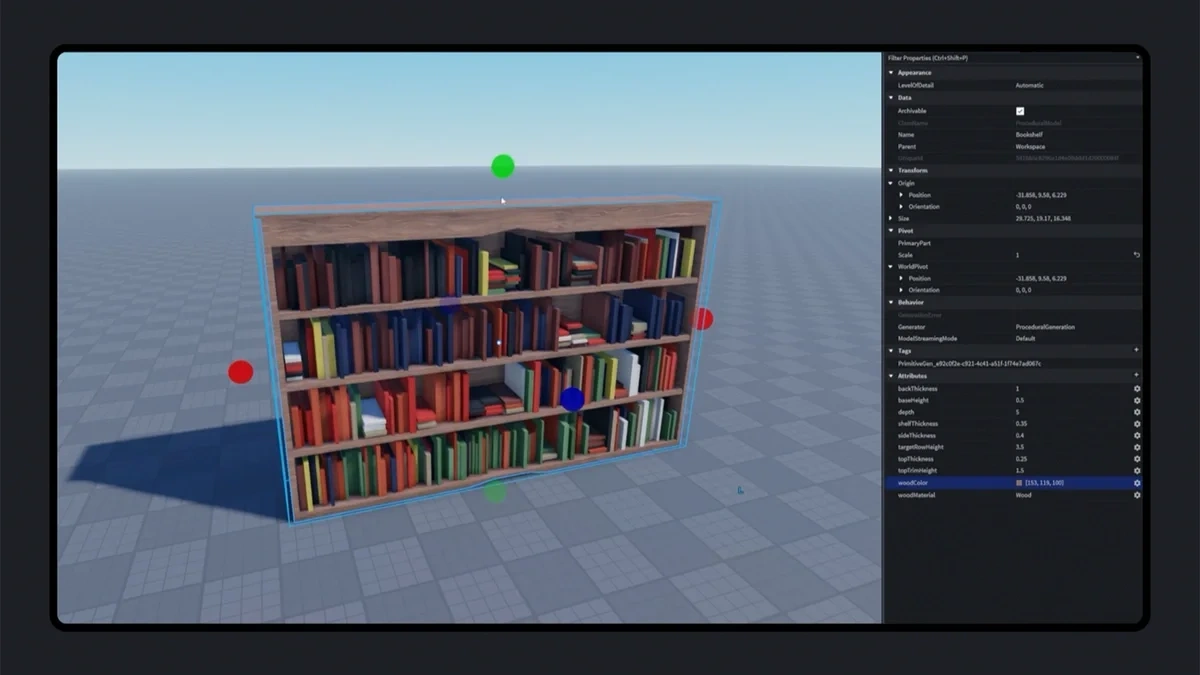

Procedural Model Generation

This is where it gets genuinely interesting for 3D creators. Procedural Models aren’t static meshes — they’re relationship-aware 3D objects defined by parameters. A staircase knows how its step count relates to its total height. A bookcase knows its shelves distribute based on the frame. Generate via text or image prompt, then adjust dynamically. No manual re-rigging when the level layout changes.
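
A rough way to picture "relationship-aware" is a model whose dimensions are derived from a few parameters instead of being baked in. The sketch below is not Roblox's Procedural Model format; the class names, fields, and the even-spacing rule for shelves are all assumptions chosen to mirror the staircase and bookcase examples.

```python
from dataclasses import dataclass

@dataclass
class Staircase:
    """Hypothetical parametric staircase: riser height follows from the other two values."""
    total_height: float  # in studs
    step_count: int

    @property
    def riser_height(self) -> float:
        # Derived on every read, so it always matches the current parameters.
        return self.total_height / self.step_count

@dataclass
class Bookcase:
    """Hypothetical parametric bookcase: shelves spread evenly inside the frame."""
    frame_height: float
    shelf_count: int

    def shelf_heights(self) -> list[float]:
        # Equal gaps below, between, and above the shelves (an assumed layout rule).
        gap = self.frame_height / (self.shelf_count + 1)
        return [gap * i for i in range(1, self.shelf_count + 1)]

stairs = Staircase(total_height=12.0, step_count=8)
print(stairs.riser_height)   # 1.5
stairs.total_height = 16.0   # the level layout changed
print(stairs.riser_height)   # 2.0, with no manual rework

shelves = Bookcase(frame_height=10.0, shelf_count=4)
print(shelves.shelf_heights())  # [2.0, 4.0, 6.0, 8.0]
```

Change one parameter and everything that depends on it follows. That is the whole trick; the AI part is getting from a text or image prompt to a sensible set of parameters and rules.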

Playtesting Agent (Beta)

The AI can now actually play your game. The Playtesting Agent reads logs, captures screenshots, simulates player inputs, identifies bugs, surfaces suggested fixes — and feeds everything back into the next planning loop. It’s a self-correcting QA system that runs while you sleep. Malt, creator of Solo Hunters, put it simply: “Community members could surface bugs overnight, and my AI system could complete tasks. When I wake up, I’d check pull requests to integrate into the game.”
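
The feedback loop described here can be sketched in a few lines. This is a toy model, not the Playtesting Agent's actual interface: the defect names, the stub `playtest` function, and the one-fix-per-pass rule are all assumptions made to show the shape of a playtest, fix, and replay cycle.

```python
from dataclasses import dataclass, field

# Hypothetical defects a playtest could surface; purely illustrative.
KNOWN_DEFECTS = {"door_collision", "spawn_point"}

@dataclass
class PlaytestReport:
    bugs: list[str] = field(default_factory=list)

def playtest(applied_fixes: set[str]) -> PlaytestReport:
    """Stub playtester: reports every known defect that has no fix applied yet."""
    return PlaytestReport(bugs=sorted(KNOWN_DEFECTS - applied_fixes))

def run_loop(max_iterations: int = 5) -> tuple[set[str], int]:
    """Playtest, fix one reported bug, and repeat until a clean run or the cap."""
    applied_fixes: set[str] = set()
    for iteration in range(1, max_iterations + 1):
        report = playtest(applied_fixes)
        if not report.bugs:
            return applied_fixes, iteration  # clean playtest: stop looping
        applied_fixes.add(report.bugs[0])  # "plan and build" one fix per pass
    return applied_fixes, max_iterations

fixes, passes = run_loop()
print(f"clean after {passes} passes, fixes applied: {sorted(fixes)}")
```

The iteration cap matters more than it looks: an overnight loop that can never declare itself done needs a hard stop so a human reviews what it produced.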

Why You Should Care

Roblox has 88 million daily active users and a creator economy that generated over $900M in developer payouts last year. When a platform this size goes all-in on agentic AI tools, it’s not an experiment — it’s a statement about where game development is going.

Consider what’s actually happening here: 44% of the top 1,000 Roblox creators are already using Roblox Assistant or third-party AI tools via MCP. These aren’t early adopters dabbling in AI — these are production creators who’ve decided the tools work well enough to ship with. And the platform is doubling down on that bet.

The MCP integration piece deserves special attention. Roblox Studio now natively talks to Claude and Cursor via the Model Context Protocol — meaning you can bring your own AI tools into the loop alongside the native ones. It’s not a closed garden. The platform is becoming programmable infrastructure for AI-augmented game creation.
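
For a sense of what "talks via MCP" means on the wire: the Model Context Protocol is built on JSON-RPC 2.0, and a client asks a server to run a tool with a `tools/call` request. The sketch below only builds such a message. The tool name `insert_model` and its `query` argument are hypothetical placeholders, not a documented Roblox Studio tool.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry an id so replies can be matched

def mcp_tool_call(tool_name: str, arguments: dict) -> str:
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)

# Hypothetical tool name and argument, for illustration only.
request = mcp_tool_call("insert_model", {"query": "wooden bookcase"})
print(request)
```

Because the protocol is the same for any client, whichever assistant you bring speaks to Studio in the same way. That is what makes this an open door, not a closed garden.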

What’s coming next is even wilder: multiple AI agents working in parallel, cloud-based complex workflows, enhanced character generation, and cross-session memory so the AI remembers your game across projects. The solo dev who wakes up to completed pull requests isn’t a fantasy scenario anymore. It’s next quarter’s feature.

Try It / Follow Them

- Roblox Studio — create.roblox.com — free, download Studio and access Roblox Assistant directly in the editor

- Official Announcement — about.roblox.com — full feature breakdown with demos

- Mesh Generation Early Access — available now via Roblox Creator Hub

- TechCrunch Coverage — techcrunch.com — good breakdown of the technical architecture

IK3D Lab Take

The early-access numbers don’t lie: over 160,000 objects generated, 64% higher average play time. That’s not AI hype; that’s AI that actually moved the needle for real creators shipping real games. What Roblox is building is essentially a CI/CD pipeline for game dev, but instead of compile steps and unit tests, it’s plan → build → playtest → commit, applied to 3D worlds.

For architects, game devs, and 3D generalists watching from the sidelines: this is the workflow coming to every major creation tool in the next 18 months. Roblox just has 88 million daily reasons to ship it first. The Procedural Model Generation angle in particular is worth watching — relationship-aware 3D that adapts when you change one parameter is the dream that every parametric design tool has chased for a decade. When a game platform ships it as an AI prompt feature, the conversation changes.

IK3D verdict: This is production-grade, not vaporware. If you have skipped Roblox Studio for the last two years because you assumed it was for kids, open it again. The toolchain just grew up.