Four months after their debut model, a small German lab nobody outside Twitter had heard of just dropped Echo-2 — and on their own benchmarks it walks past Marble 1.1, HunyuanWorld 2.0 and NVIDIA Lyra 2.0. One image in. One coherent, navigable, splat-rendered world out. In your browser. On modest hardware. We need to talk about SpAItial.

The Story

SpAItial AI shipped Echo-2 on April 28, 2026, and they did it without the unicorn-scale war chest of Fei-Fei Li’s World Labs or the Tencent-scale GPU budget behind HunyuanWorld. The model is what they call a physically-grounded world model: feed it an image or a text prompt, and instead of vomiting frames like a video diffuser, it builds a single, spatially persistent 3D scene representation that captures geometry and appearance in one unified layout.

That distinction matters more than it sounds. Video-based world models — and yes, that includes most of the heavyweights we’ve covered this year — generate frame after frame and pray the geometry doesn’t drift. It does. Walls bend, ceilings warp the moment you turn around, and any kind of edit becomes a wrestling match with temporal hallucinations. Echo-2 just… doesn’t do that. The world is the world. You walk around. Geometry stays put.

The output is a 3D Gaussian Splatting scene. GPU-friendly, browser-ready, no special viewer required. The web demo runs on modest hardware — that’s their wording, and judging by the demo on a 2021 laptop GPU, it’s honest. Distill it down to meshes or point clouds for whatever pipeline you live in: Unreal, Unity, Blender, robotics sim, doesn’t matter.
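To make the "distill it down to point clouds" step concrete, here is a minimal sketch in dependency-free Python. It assumes the splats follow the conventions of the reference 3D Gaussian Splatting code (opacity stored as a pre-sigmoid logit, base colour stored as a zeroth-order spherical-harmonic coefficient). SpAItial has not published Echo-2's export format as far as this post knows, so the `Splat` class, its field names, and the `min_opacity` threshold are all hypothetical.

```python
import math
from dataclasses import dataclass

# Zeroth-order spherical-harmonic constant, as used in the reference 3DGS code.
SH_C0 = 0.28209479177387814


@dataclass
class Splat:
    """Hypothetical minimal splat record; not Echo-2's actual format."""
    position: tuple[float, float, float]
    opacity_logit: float                 # assumed: opacity before the sigmoid
    sh_dc: tuple[float, float, float]    # assumed: DC colour coefficients


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def splats_to_point_cloud(splats, min_opacity=0.5):
    """Keep the centre of every sufficiently opaque splat as a coloured point.

    Scale, rotation and higher-order colour terms are discarded, so this
    is a lossy conversion, not a faithful re-render of the scene.
    """
    points = []
    for s in splats:
        if sigmoid(s.opacity_logit) < min_opacity:
            continue  # nearly transparent splats add noise, not surface
        # Convert the DC coefficient to RGB and clamp to the [0, 1] range.
        rgb = tuple(min(1.0, max(0.0, 0.5 + SH_C0 * c)) for c in s.sh_dc)
        points.append((s.position, rgb))
    return points


scene = [
    Splat((0.0, 0.0, 0.0), 4.0, (0.0, 0.0, 0.0)),   # opaque, mid-grey
    Splat((1.0, 1.0, 1.0), -4.0, (1.0, 1.0, 1.0)),  # almost transparent
]
cloud = splats_to_point_cloud(scene)
```

Only the first splat survives the opacity filter here. A real pipeline would go on to write the points out as PLY or hand them to a meshing step, which is beyond this sketch.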

Why You Should Care

The interesting part isn’t the leaderboard — vendor benchmarks always favor the vendor. The interesting part is what Echo-2 does between generation and editing.

It predicts semantic segmentation masks on the scene itself. Walls, floors, chairs, tables, lamps — every component gets a discrete identity inside the splat representation. Which means you can prompt “remove that ugly couch,” “swap the dining table for something Scandinavian,” or “restyle the whole apartment in 1970s brutalist” and the rest of the geometry stays globally coherent. That’s the kind of object-level surgery 3DGS scenes have been begging for since the format went mainstream.
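Why do per-splat labels make "remove that ugly couch" tractable? A rough sketch, under the assumption that every Gaussian carries a semantic class: removing or swapping an object becomes a filter over the splat list, and everything else is untouched by construction. The `LabeledSplat` type and both functions below are hypothetical illustrations, not Echo-2's API, and a real system would also have to fill in whatever the removed object was hiding.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LabeledSplat:
    """Hypothetical splat with a semantic class attached."""
    position: tuple[float, float, float]
    label: str  # assumed per-splat class, e.g. "wall", "floor", "couch"


def remove_object(scene, label):
    """Return a new scene without the splats tagged `label`."""
    return [s for s in scene if s.label != label]


def swap_object(scene, label, replacement):
    """Drop every splat tagged `label` and append the replacement splats."""
    return remove_object(scene, label) + list(replacement)


room = [
    LabeledSplat((0.0, 0.0, 0.0), "floor"),
    LabeledSplat((0.0, 1.0, 0.0), "wall"),
    LabeledSplat((1.0, 0.2, 0.5), "couch"),
    LabeledSplat((1.1, 0.2, 0.5), "couch"),
]

no_couch = remove_object(room, "couch")
new_table = [LabeledSplat((1.0, 0.3, 0.5), "table")]
restaged = swap_object(room, "couch", new_table)
```

Both functions return new lists and leave `room` alone, which mirrors the claim in the text: the edit is local to the labelled object and the rest of the geometry is carried over unchanged.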

- Virtual staging — drop furniture into an empty room from a single photo. Real-estate workflows just got automated.

- Floorplan-to-3D — upload a 2D plan, get a fully consistent navigable architectural world out the other end. Architects, look up.

- Style transfer at scene scale — re-skin a whole environment with one prompt without breaking spatial layout.

- Robotics & digital twins — clone a factory hall or a kitchen from a phone snap and use it as training data. No LiDAR rig, no photogrammetry studio.

And SpAItial is explicit about what’s next for Echo-2: temporal consistency and physics-based reasoning. In other words, scenes that not only hold their shape but also obey gravity, collide, simulate. That’s the on-ramp to interactive simulation, robotics training, and games where the world is generated, not authored.

Try It / Follow Them

- Web demo: spaitial.ai (free trials, no install)

- Release post: Echo-2: Physically-grounded 3D World Generation

- Twitter / X: @SpAItial_AI

- Coverage: Radiance Fields write-up

IK3D Lab Take

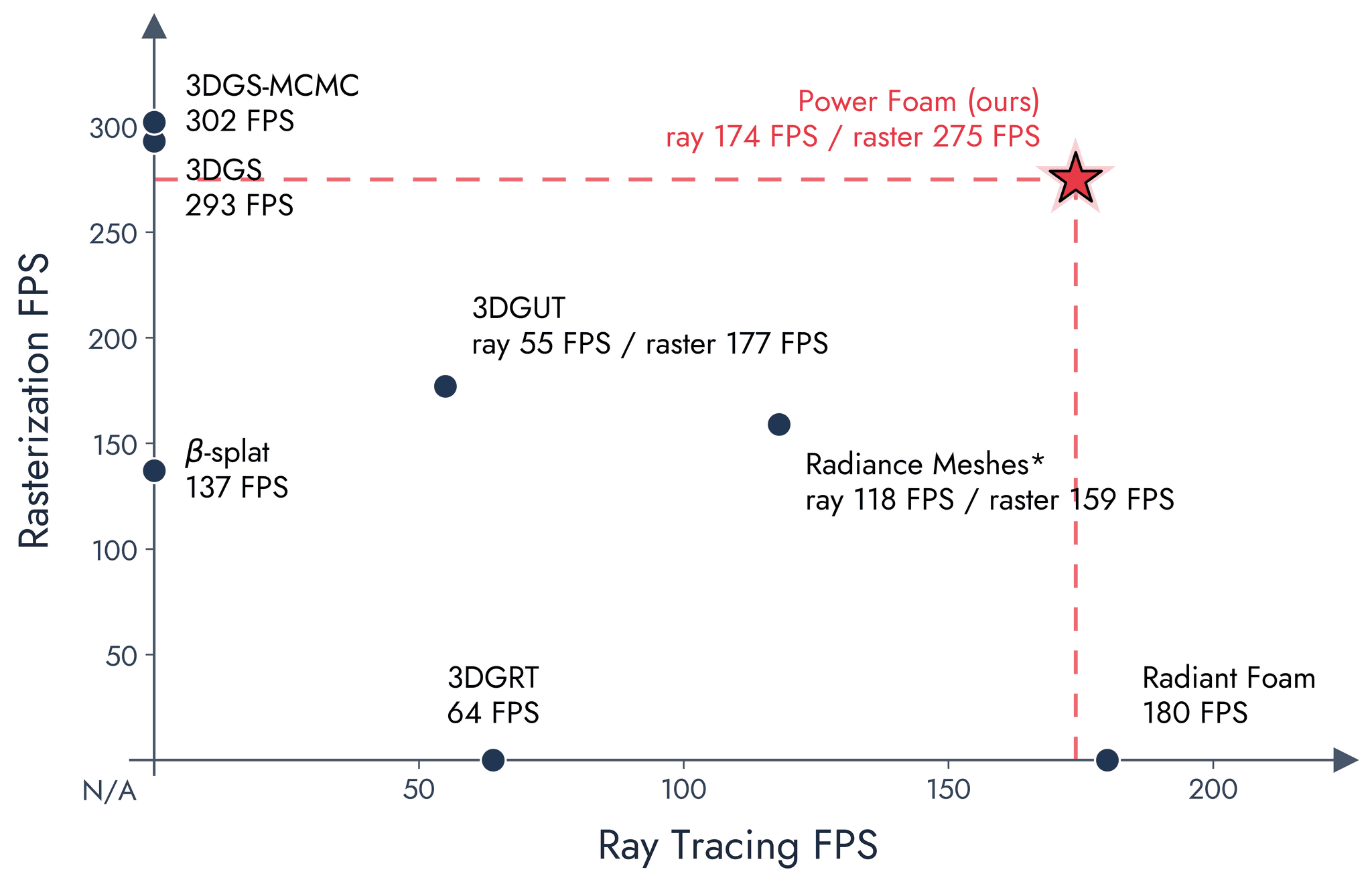

The world-model race in 2026 was supposed to be a two-horse contest between World Labs and the Chinese hyperscalers. Echo-2 says nope: a focused European team can hit the same notes with a different architectural philosophy, predicting the full 3D representation in one shot instead of unrolling video and praying. The vendor’s own benchmark charts need independent verification before we crown anyone, and we’ll happily eat our words once Radiance Fields or Hugging Face runs the numbers cold. But the design choice is the headline here: spatially persistent beats temporally hallucinated, full stop.

For 3D artists, archviz folks, and game devs reading this: the part that should keep you up tonight is the semantic-segmentation editing. Once you can prompt-edit individual objects inside a splat without breaking the rest of the scene, the line between “generated 3D content” and “authored 3D content” gets very, very thin. Echo-2 isn’t the destination. It’s a checkpoint that says: yeah, the post-NeRF, post-video world-model era is here, and there’s already more than one team doing it well.

Go play with it before the GPU queue gets ugly.