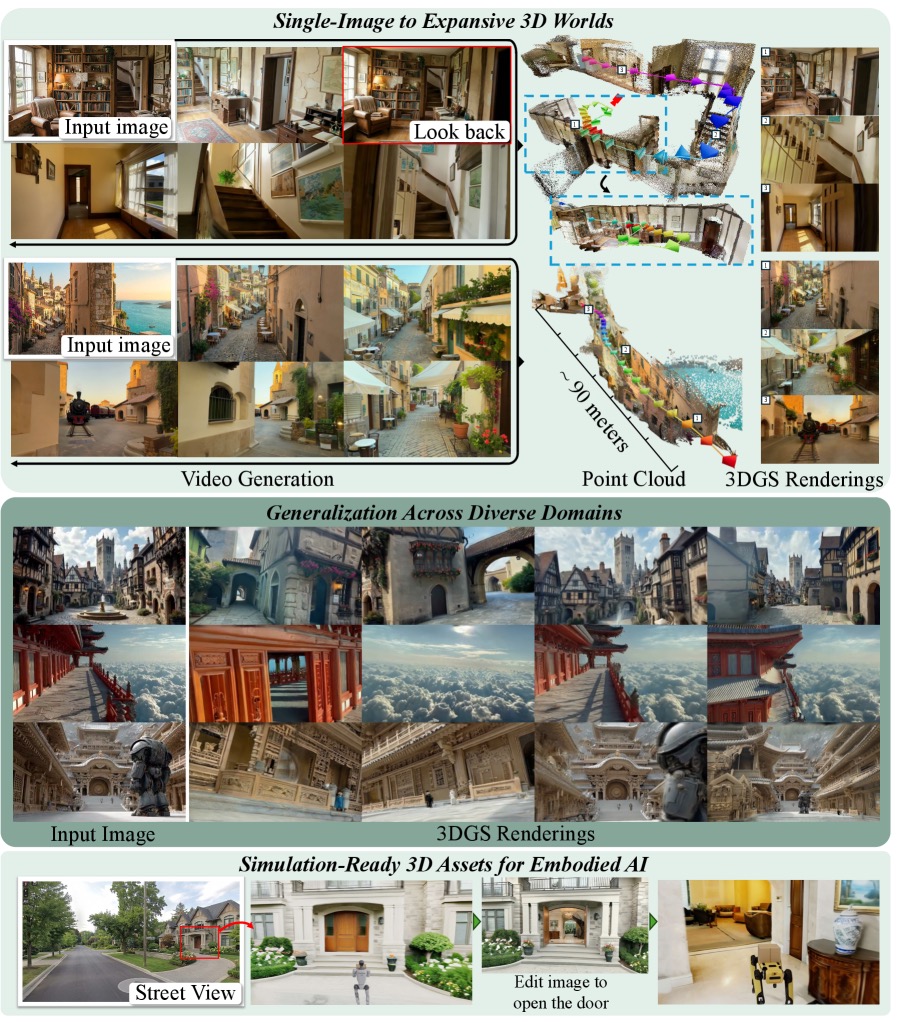

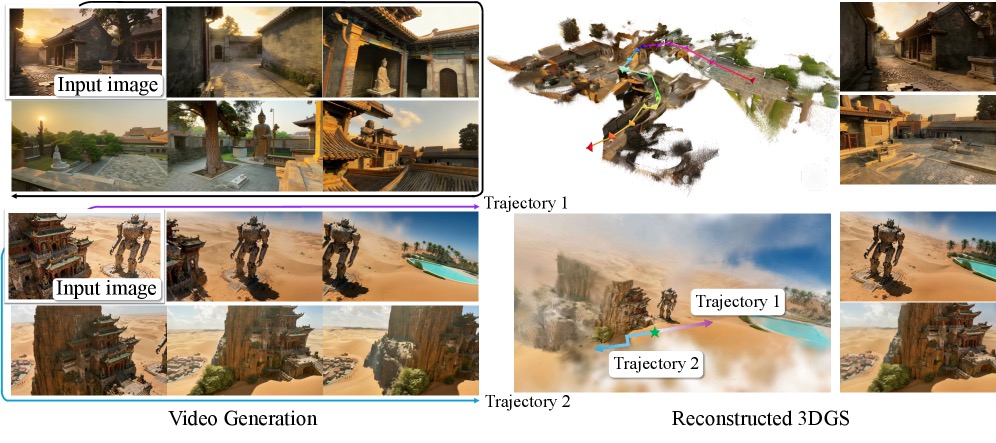

NVIDIA’s Spatial Intelligence Lab just dropped Lyra 2.0 (open code, published April 2026), and it does something genuinely wild: hand it one photo, define a camera path, and watch it generate a persistent, explorable 3D world. Then export the whole thing as Gaussian splats or a mesh. Not a skybox. Not a turntable render. A walkable world.

The Story

The original Lyra showed that video diffusion models could reconstruct 3D scenes from generated video. Lyra 2.0 goes much further: it tackles the two hard problems that killed every previous approach at scale.

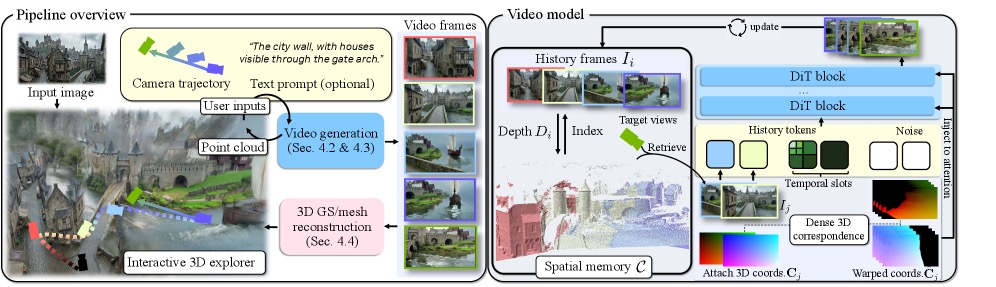

Problem 1: Spatial forgetting. As you move a camera through a scene, older views fall out of context. Previous systems simply forgot what they rendered 30 seconds ago, creating jarring inconsistencies when you revisited a location. Lyra 2.0 fixes this with a spatial memory system: it accumulates per-frame 3D geometry as a point cloud, then retrieves the most relevant past frames whenever the camera revisits a region. It establishes dense correspondences between those retrieved frames and the new viewpoint and shows the remembered views to the generative model, so the hallway you passed through earlier stays consistent when you return.
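To make the retrieval idea concrete, here is a minimal sketch in plain Python. It is not Lyra 2.0's code: the `MemoryFrame` class, the `retrieve_frames` function, and the scoring rule (count how many of a frame's stored points fall inside a simple viewing cone) are all assumptions chosen for illustration. The real system works on dense per-frame geometry and also computes correspondences, which this toy skips.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass
class MemoryFrame:
    """Hypothetical memory entry: a frame id plus the world-space points it saw."""
    frame_id: int
    points: list[Vec3]


def _visible(point, cam_pos, fwd, half_fov_rad, max_dist):
    """True if the point lies inside a viewing cone around the unit vector fwd."""
    dx, dy, dz = (point[i] - cam_pos[i] for i in range(3))
    dist = math.sqrt(dx * dx + dy * dy + dz * dz)
    if dist == 0.0 or dist > max_dist:
        return False
    cos_angle = (dx * fwd[0] + dy * fwd[1] + dz * fwd[2]) / dist
    return cos_angle >= math.cos(half_fov_rad)


def retrieve_frames(memory, cam_pos, cam_forward, k=2,
                    half_fov_deg=45.0, max_dist=10.0):
    """Return ids of the k past frames whose points are most visible from the new pose."""
    norm = math.sqrt(sum(c * c for c in cam_forward))
    if norm == 0.0:
        raise ValueError("cam_forward must be non-zero")
    fwd = tuple(c / norm for c in cam_forward)
    half = math.radians(half_fov_deg)
    scored = []
    for frame in memory:
        count = sum(_visible(p, cam_pos, fwd, half, max_dist) for p in frame.points)
        if count > 0:  # frames with nothing in view are never retrieved
            scored.append((count, frame.frame_id))
    scored.sort(key=lambda s: (-s[0], s[1]))  # most visible first, ties by id
    return [frame_id for _, frame_id in scored[:k]]


memory = [
    MemoryFrame(0, [(0.0, 0.0, 5.0), (0.5, 0.0, 5.0), (-0.5, 0.0, 6.0)]),  # ahead (+z)
    MemoryFrame(1, [(0.0, 0.0, -5.0), (1.0, 0.0, -6.0)]),                  # behind (-z)
    MemoryFrame(2, [(0.0, 0.0, 4.0), (8.0, 0.0, 1.0)]),                    # partly ahead
]
looking_forward = retrieve_frames(memory, (0.0, 0.0, 0.0), (0.0, 0.0, 1.0))
looking_back = retrieve_frames(memory, (0.0, 0.0, 0.0), (0.0, 0.0, -1.0))
```

Looking down +z, the sketch ranks frame 0 ahead of frame 2 and ignores frame 1. Turn the camera around and only frame 1 comes back, which is the "remember what was here" behaviour in miniature.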

Problem 2: Temporal drift. Autoregressive generation is like a telephone game: small errors compound over time, producing color shifts, texture wobble, and geometry collapse after a few hundred frames. Lyra 2.0 counters this with self-augmented training: the model is deliberately trained on its own degraded outputs, teaching it to correct drift rather than propagate it. The result: scenes that stay coherent over 800+ frames, not just the first 30.
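A toy sketch of what "training on its own degraded outputs" can look like at the data level. Everything here is an assumption for illustration: `noisy_rollout` is a hypothetical stand-in for the model's imperfect output (a real setup would run the model itself), frames are just short lists of floats, and `p_self_aug` is an invented mixing probability. The point it shows is only the pairing: the conditioning history is degraded, the target stays clean.

```python
import random


def noisy_rollout(frame, rng, strength=0.05):
    """Hypothetical stand-in for the model's own slightly-off output for a frame."""
    return [v + rng.uniform(-strength, strength) for v in frame]


def make_training_example(clean_history, clean_target, rng, p_self_aug=0.5):
    """Build one (history, target) pair.

    With probability p_self_aug the history is replaced by degraded frames,
    so the model has to learn to produce a clean target from drifted context.
    """
    if rng.random() < p_self_aug:
        history = [noisy_rollout(frame, rng) for frame in clean_history]
    else:
        history = [list(frame) for frame in clean_history]
    return history, list(clean_target)


rng = random.Random(0)
clean_history = [[0.2, 0.4], [0.3, 0.5]]
clean_target = [0.4, 0.6]

# p_self_aug=1.0 forces the degraded branch; 0.0 forces the clean one.
aug_history, aug_target = make_training_example(clean_history, clean_target, rng, 1.0)
plain_history, plain_target = make_training_example(clean_history, clean_target, rng, 0.0)
```

In both cases the target is the clean frame. Only the context the model sees changes, which is what pushes it toward correcting drift instead of copying it forward.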

Under the hood, Lyra 2.0 runs on a Wan 2.1-14B video diffusion backbone — the same open-weight model powering some of the best video generation tools right now. The pipeline has two stages: (1) generate a long-range walkthrough video from your input image and camera trajectory, (2) lift that video into explicit 3D — either Gaussian splats or surface meshes — using a feed-forward reconstruction step.
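"Define a camera path" usually means handing the pipeline one pose per frame. The sketch below builds a simple dolly-forward path with a slow pan, as a list of 4x4 camera-to-world matrices. The function names (`yaw_pose`, `dolly_path`), the axis convention, and the matrix layout are assumptions for illustration; check the Lyra repository for the trajectory format it actually expects.

```python
import math


def yaw_pose(position, yaw_deg):
    """4x4 camera-to-world matrix: rotation about the y axis plus a translation."""
    c = math.cos(math.radians(yaw_deg))
    s = math.sin(math.radians(yaw_deg))
    x, y, z = position
    return [
        [c, 0.0, s, x],
        [0.0, 1.0, 0.0, y],
        [-s, 0.0, c, z],
        [0.0, 0.0, 0.0, 1.0],
    ]


def dolly_path(start, end, num_frames, end_yaw_deg=0.0):
    """Linearly interpolate position and yaw from the first frame to the last."""
    if num_frames < 2:
        raise ValueError("need at least two frames to define a path")
    poses = []
    for i in range(num_frames):
        t = i / (num_frames - 1)  # 0.0 at the first frame, 1.0 at the last
        position = tuple(a + t * (b - a) for a, b in zip(start, end))
        poses.append(yaw_pose(position, t * end_yaw_deg))
    return poses


# Move 4 units along +z while panning 30 degrees, one pose per frame.
path = dolly_path((0.0, 0.0, 0.0), (0.0, 0.0, 4.0), num_frames=80, end_yaw_deg=30.0)
```

Stage 1 would consume a path like this together with the input image. Stage 2 then lifts the resulting video into splats or a mesh.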

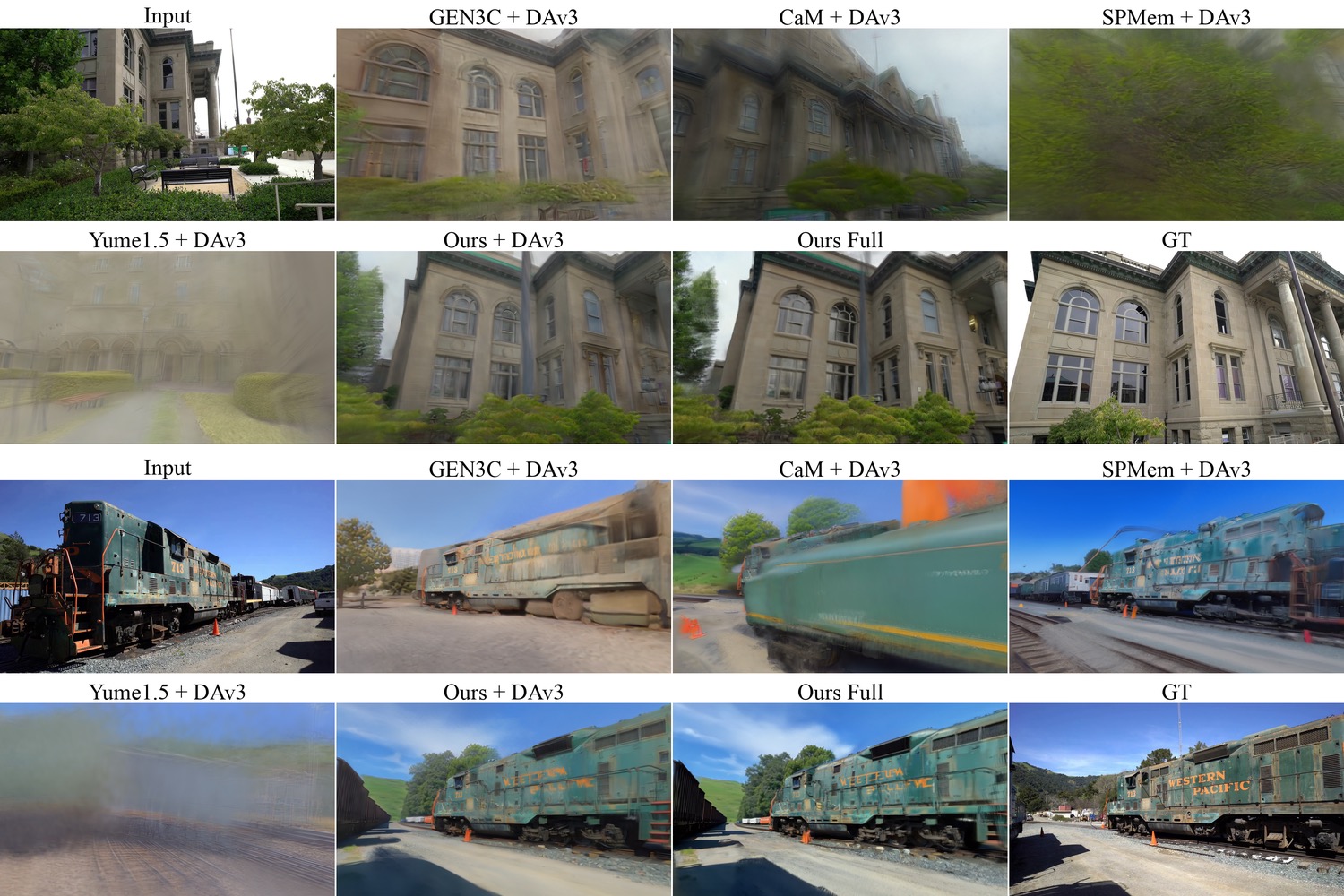

The performance numbers are stated plainly: the full model takes ~194 seconds per 80-frame segment on a single GB200 GPU. The distilled 4-step variant cuts that to ~15 seconds, roughly 13x faster and much more usable. On the DL3DV and Tanks & Temples benchmarks, Lyra 2.0 outperforms GEN3C, CaM, VMem, SPMem, Yume-1.5, and HY-WorldPlay.
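A quick back-of-the-envelope on what those timings mean for a long walkthrough. The per-segment figures come from the numbers above. The assumption that an 800-frame scene is simply ten back-to-back 80-frame segments, with no overlap or per-segment overhead, is mine, so treat the totals as rough.

```python
import math

FULL_S_PER_SEGMENT = 194       # full model, seconds per segment
DISTILLED_S_PER_SEGMENT = 15   # distilled 4-step variant
FRAMES_PER_SEGMENT = 80


def estimate_seconds(total_frames, seconds_per_segment,
                     frames_per_segment=FRAMES_PER_SEGMENT):
    """Rough generation time, assuming non-overlapping segments and no overhead."""
    segments = math.ceil(total_frames / frames_per_segment)
    return segments * seconds_per_segment


speedup = FULL_S_PER_SEGMENT / DISTILLED_S_PER_SEGMENT           # about 12.9x
full_800 = estimate_seconds(800, FULL_S_PER_SEGMENT)             # 1940 s, ~32 min
distilled_800 = estimate_seconds(800, DISTILLED_S_PER_SEGMENT)   # 150 s, 2.5 min
```

Under those assumptions, an 800-frame exploration drops from about half an hour to a couple of minutes with the distilled model.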

Why You Should Care

The Gaussian Splatting export is what makes Lyra 2.0 actually useful for 3D artists, architects, and game developers. We’re not talking about a pretty demo — we’re talking about a pipeline where you take a reference photo of a real location, a concept art piece, or even a generated image, and turn it into a fully navigable 3D environment ready for import into Blender, Unreal Engine, or a physics engine. NVIDIA already demonstrated exporting scenes directly into Isaac Sim for robot navigation.

For architects: spatial exploration of a single mood render without needing a modeled scene. For game devs: rapid environment prototyping from concept art. For 3D artists: a way to get explorable Gaussian Splat environments from any reference image, no photogrammetry required.

Try It / Follow Them

- NVIDIA Research Project Page: research.nvidia.com/labs/sil/projects/lyra2/ — demos, videos, paper

- GitHub (Apache 2.0 code): github.com/nv-tlabs/lyra — open source, ready to run

- Hugging Face model card: huggingface.co/nvidia/Lyra-2.0 — model weights (research license)

- ArXiv paper: arxiv.org/abs/2604.13036 — full technical breakdown

Note: the code is Apache 2.0, but the model weights ship under an NVIDIA Internal Scientific Research and Development license: no commercial deployment, no distribution of generated works for sale. This is a research release, not a product. But for a maker running experiments, prototyping environments, or exploring the architecture for fine-tuning? The code is there, the weights are downloadable, and the distilled 4-step variant makes local experiments plausible on H100-class hardware (the timings quoted earlier were measured on a GB200, so expect slower runs).

IK3D Lab Take

Lyra 2.0 is the most technically credible answer yet to “what if I could just walk through my reference image?” The spatial memory system and self-augmented training aren’t just clever tricks — they solve the actual failure modes that have held back every world-generation approach for the past two years. The Wan 2.1-14B backbone means the community can fine-tune it, and the open code means someone will. Watch for fine-tuned variants trained on architectural photography, game concept art, and aerial imagery to appear on Hugging Face in the coming months. This is the foundation model the 3D exploration space has been missing.

The licensing caveat is real and worth knowing — but for research, learning, and creative prototyping, Lyra 2.0 is available right now. Go explore.