One photo. A forest clearing, a sci-fi corridor, a medieval courtyard. That’s all HunyuanWorld 2.0 needs to build a fully navigable, editable 3D world, complete with Gaussian Splats, meshes, and point clouds you can drop directly into Unity, Unreal, or Blender. Tencent just released it as open source. Today.

The Story

HunyuanWorld 2.0 (HY-World 2.0) is Tencent’s new multi-modal 3D world model — and it’s a genuinely different beast from everything that’s come before. While tools like Google’s Genie 3 or Meta’s WorldGen generate video sequences you can watch but can’t edit, HunyuanWorld 2.0 generates actual 3D geometry. Real meshes. Real Gaussian Splat scenes. Real editable world files you can load in a game engine and start building on.

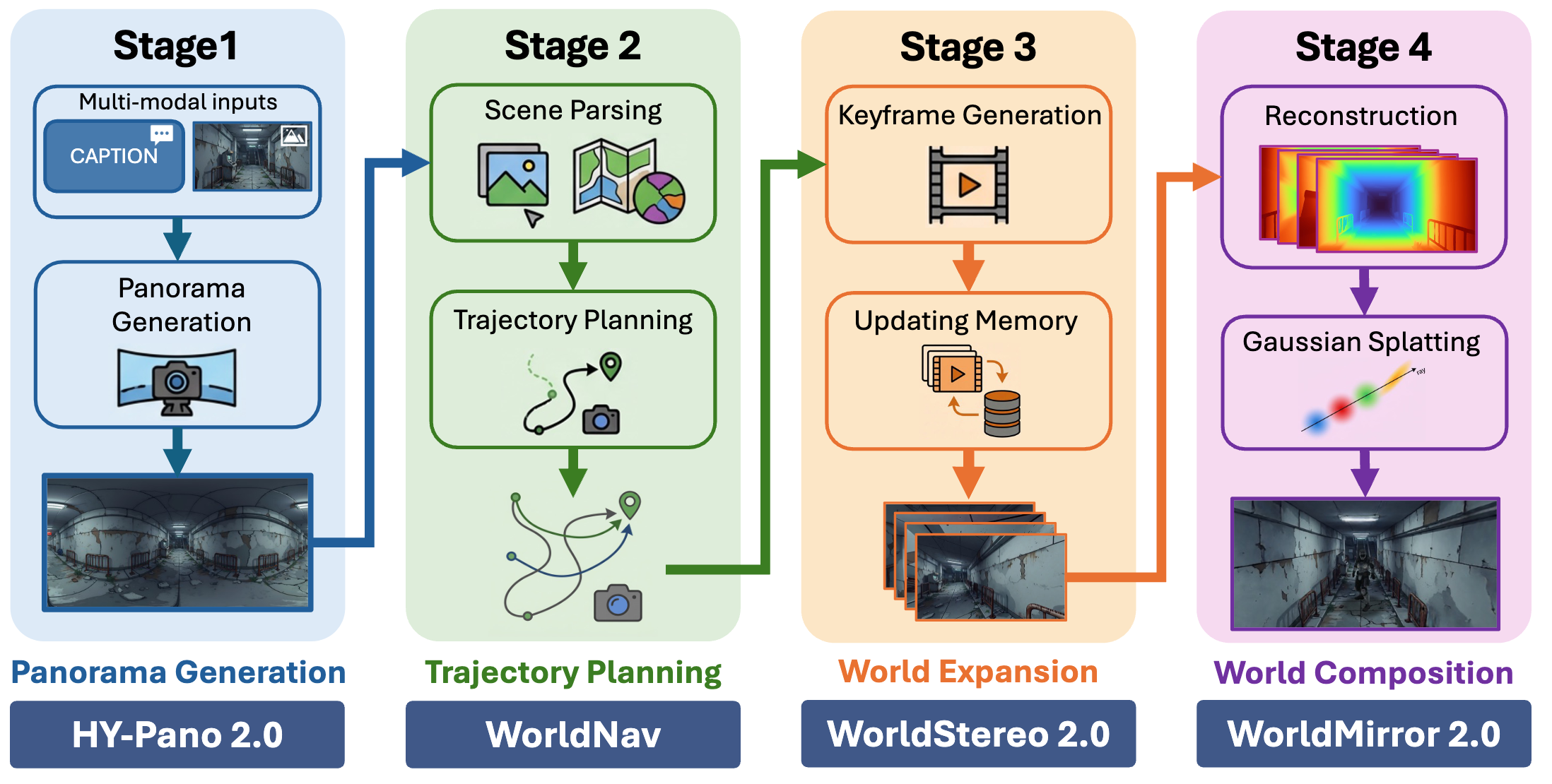

The model accepts a remarkable range of inputs: a single text prompt, a single image, multi-view captures, or even video sequences. Feed it any of those and it outputs a navigable 3D world through a four-stage pipeline Tencent calls the World Generation Engine:

- HY-Pano 2.0 — Generates a high-quality panoramic representation of the environment

- WorldNav — Plans the exploration trajectory through the scene geometry

- WorldStereo 2.0 — Expands the world outward from that trajectory with stereo depth

- WorldMirror 2.0 + 3DGS — Composes the final scene into Gaussian Splats and navigable meshes
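Each stage hands its output to the next. The Python sketch models only that data flow. Every function and type in it (`hy_pano`, `world_nav`, `world_stereo`, `world_mirror`, `Artifact`) is a hypothetical stub, not HY-World 2.0's API. Treating the four stages as a strictly linear chain is also an assumption, based on the order Tencent lists them.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical stand-in type: the real HY-World 2.0 data structures are not
# described in the article, so each stage output is just a labeled record.
@dataclass
class Artifact:
    kind: str                                        # what this stage produced
    source: str                                      # kind of artifact it was derived from
    trace: List[str] = field(default_factory=list)  # stages applied so far

def hy_pano(prompt_or_image: str) -> Artifact:
    # Stage 1 (HY-Pano 2.0): text or image -> panoramic view of the environment
    return Artifact("panorama", prompt_or_image, ["HY-Pano 2.0"])

def world_nav(pano: Artifact) -> Artifact:
    # Stage 2 (WorldNav): plan an exploration trajectory through the scene
    return Artifact("trajectory", pano.kind, pano.trace + ["WorldNav"])

def world_stereo(traj: Artifact) -> Artifact:
    # Stage 3 (WorldStereo 2.0): expand the world outward along the trajectory
    return Artifact("expanded_views", traj.kind, traj.trace + ["WorldStereo 2.0"])

def world_mirror(views: Artifact) -> Artifact:
    # Stage 4 (WorldMirror 2.0 + 3DGS): compose Gaussian Splats and meshes
    return Artifact("scene", views.kind, views.trace + ["WorldMirror 2.0 + 3DGS"])

def generate_world(prompt_or_image: str) -> Artifact:
    # Each stage consumes only the previous stage's output.
    return world_mirror(world_stereo(world_nav(hy_pano(prompt_or_image))))

scene = generate_world("a medieval courtyard")
print(scene.trace)
```

Because each stage depends only on its predecessor's output, a replacement for any single stage only has to match that stage's input and output.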

The standout component is WorldMirror 2.0. In a single forward pass, it simultaneously predicts depth maps, surface normals, camera parameters, 3D point clouds, and 3DGS attributes. No multi-step reconstruction, no iterative refinement loops. One pass, complete 3D scene. The model supports flexible-resolution inference, from 50K pixels for fast previews all the way up to 500K pixels for full-quality exports, and the generated worlds include physics-enabled collision detection, so characters and NPCs can walk through them without passing through walls and floors, out of the box.
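Depth maps, camera parameters, and point clouds are tightly linked. With a pinhole camera, each pixel's depth plus the intrinsics (focal lengths `fx`, `fy` and principal point `cx`, `cy`) lifts it to a 3D point. The sketch shows that textbook back-projection in plain Python. It is not WorldMirror's code, and the tiny depth map and intrinsics are made-up values. It yields camera-space points only; placing them in a shared world frame would also need the predicted camera pose.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]

def backproject(depth: List[List[float]], fx: float, fy: float,
                cx: float, cy: float) -> List[Point]:
    """Lift a depth map to camera-space 3D points with a pinhole model.

    depth[v][u] is the z-distance of pixel (u, v); pixels with depth <= 0
    are treated as invalid and skipped.
    """
    points: List[Point] = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0:
                continue  # no valid depth for this pixel
            x = (u - cx) * z / fx  # horizontal offset scaled by depth
            y = (v - cy) * z / fy  # vertical offset scaled by depth
            points.append((x, y, z))
    return points

# Hypothetical 2x2 depth map and intrinsics, purely for illustration.
depth = [[2.0, 2.0],
         [0.0, 4.0]]
pts = backproject(depth, fx=1.0, fy=1.0, cx=0.0, cy=0.0)
print(pts)  # [(0.0, 0.0, 2.0), (2.0, 0.0, 2.0), (4.0, 4.0, 4.0)]
```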

Tencent’s own benchmarks back the quality claims: WorldStereo 2.0 outperforms SEVA and Gen3C on camera-accuracy metrics on both the Tanks and Temples and Mip-NeRF 360 datasets. Tencent positions HY-World 2.0 as the first open-source 3D world model to match closed-source alternatives such as World Labs’ Marble, from Fei-Fei Li’s spatial AI startup, valued at over $1B.

Why You Should Care

The AI world-generation space has been noisy — lots of impressive demos that produce beautiful videos but leave you with… a video. HunyuanWorld 2.0 flips that equation entirely.

You get files. Actual 3D files. The kind you open in Blender and start tweaking. The kind you import into Unreal Engine and set your character loose in. The kind you drop into Isaac Sim to run robotics simulations. This isn’t “watch a world render” — it’s “here’s your new level. Start building.”

For game developers, this is a major breakthrough for environment prototyping — rough concepts become walkable levels in minutes. For architects, it’s concept-to-walkthrough from a single reference photo. For 3D artists, it’s a fully formed foundation to sculpt on top of. For VFX studios, it’s location-scouting without a scout.

The Gaussian Splatting output is particularly interesting: you get photorealistic rendering quality baked in, and the Splat scenes load in Unreal Engine and Blender through their 3DGS plugins and add-ons, as well as in any renderer that has adopted the format. Combine that with the traditional mesh output and you have both the “stunning demo” and the “production-ready asset” in a single generation run.
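Engine and renderer compatibility comes down to how each splat is stored. The sketch assumes HY-World 2.0 writes splats in the PLY layout used by the original 3D Gaussian Splatting reference implementation. That is an assumption; check the repository's export code. In that layout, color is a zeroth-order spherical-harmonics coefficient, opacity is a logit, scale is a log value, and rotation is a quaternion with the real part first. `decode_splat` is a hypothetical helper that converts one stored record into renderable values. It ignores the higher-order `f_rest_*` coefficients that carry view-dependent color.

```python
import math
from typing import Dict

# Zeroth-order spherical-harmonics constant used by the reference 3DGS code.
SH_C0 = 0.28209479177387814

def decode_splat(raw: Dict[str, float]) -> Dict[str, tuple]:
    """Convert one splat's stored PLY fields into renderable values."""
    # Base color from the DC SH coefficients, clamped to [0, 1].
    rgb = tuple(max(0.0, min(1.0, 0.5 + SH_C0 * raw[f"f_dc_{i}"])) for i in range(3))
    # Opacity is stored as a logit; the sigmoid maps it back to (0, 1).
    opacity = 1.0 / (1.0 + math.exp(-raw["opacity"]))
    # Per-axis scale is stored in log space.
    scale = tuple(math.exp(raw[f"scale_{i}"]) for i in range(3))
    # Rotation quaternion (w, x, y, z), normalized to unit length.
    q = [raw[f"rot_{i}"] for i in range(4)]
    norm = math.sqrt(sum(c * c for c in q))
    if norm == 0.0:
        raise ValueError("degenerate rotation quaternion")
    rotation = tuple(c / norm for c in q)
    return {"position": (raw["x"], raw["y"], raw["z"]), "rgb": rgb,
            "opacity": (opacity,), "scale": scale, "rotation": rotation}

# Hypothetical stored record, purely for illustration.
raw = {"x": 1.0, "y": 2.0, "z": 3.0,
       "f_dc_0": 0.0, "f_dc_1": 0.0, "f_dc_2": 0.0,
       "opacity": 0.0, "scale_0": 0.0, "scale_1": 0.0, "scale_2": 0.0,
       "rot_0": 2.0, "rot_1": 0.0, "rot_2": 0.0, "rot_3": 0.0}
print(decode_splat(raw))
```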

Try It / Follow Them

Everything is open-source and live right now:

- GitHub: github.com/Tencent-Hunyuan/HY-World-2.0

- Hugging Face models: huggingface.co/tencent/HY-World-2.0

- Live demo (image -> 3D world): 3d.hunyuan.tencent.com/sceneTo3D

- Project page + examples: 3d-models.hunyuan.tencent.com/world/

- Discord community: discord.gg/dNBrdrGGMa

To run locally, you’ll need the model weights from Hugging Face and a reasonably capable GPU. The inference is flexible — scale resolution from fast previews to full-quality 500K pixel exports depending on your hardware.
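The 50K-to-500K range is a pixel budget, not a fixed resolution, so the actual width and height depend on the input's aspect ratio. The sketch turns a budget into a concrete size. `fit_resolution` is a hypothetical helper, not part of the HY-World 2.0 codebase. The default rounding to multiples of 14 is also an assumption, borrowed from the 14-pixel patch size common in ViT-style backbones; the repository's real rounding rule may differ.

```python
import math
from typing import Tuple

def fit_resolution(pixel_budget: int, aspect: float, multiple: int = 14) -> Tuple[int, int]:
    """Return (width, height) close to `aspect` with width * height <= pixel_budget.

    Both sides are rounded down to a multiple of `multiple` (a hypothetical
    patch size), but never below one multiple, even for a tiny budget.
    """
    if pixel_budget <= 0 or aspect <= 0 or multiple <= 0:
        raise ValueError("budget, aspect and multiple must be positive")
    height = math.sqrt(pixel_budget / aspect)  # solve w * h = budget with w = aspect * h
    width = height * aspect
    h = max(multiple, int(height // multiple) * multiple)
    w = max(multiple, int(width // multiple) * multiple)
    return w, h

print(fit_resolution(50_000, 16 / 9))   # (294, 154): fast preview
print(fit_resolution(500_000, 16 / 9))  # (938, 518): full-quality export
```

Rounding down rather than to the nearest multiple keeps the result inside the budget, which matters when the budget is chosen to fit GPU memory.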

IK3D Lab Take

HunyuanWorld 2.0 is the release that forces us to rethink the definition of “world generation.” The field has been obsessed with video models that simulate worlds — but what creative technologists actually need are assets they can use. Tencent just gave us that. Open-source. Free. Today.

The combination of 3DGS output (photorealistic, engine-compatible) with traditional mesh output (editable, physics-ready) is clever — it covers both the “looks incredible in a demo” requirement and the “actually usable in production” requirement simultaneously. And WorldMirror 2.0’s single-pass reconstruction is genuinely impressive technical work.

The pipeline architecture (pano -> trajectory -> stereo expansion -> composition) is clean and extensible. Expect community forks to start adding more control points fast. And if World Labs’ Marble has to compete with a free, open-source alternative at this quality level? The 3D world generation race just got a lot more interesting.