Most image generators guess. They take your prompt, roll the statistical dice, and hand you whatever comes out. ChatGPT Images 2.0, released April 21, 2026, does something fundamentally different: it thinks. And for creative technologists, that changes everything.

The Story

OpenAI dropped ChatGPT Images 2.0 on April 21, 2026, quietly redefining what it means for an AI to “generate an image.” The model, available in ChatGPT and in the API as gpt-image-2, introduces two modes: Instant (the classic fast generation everyone knows) and Thinking (where the model reasons through structure, plans layouts, and checks its own work before producing output).

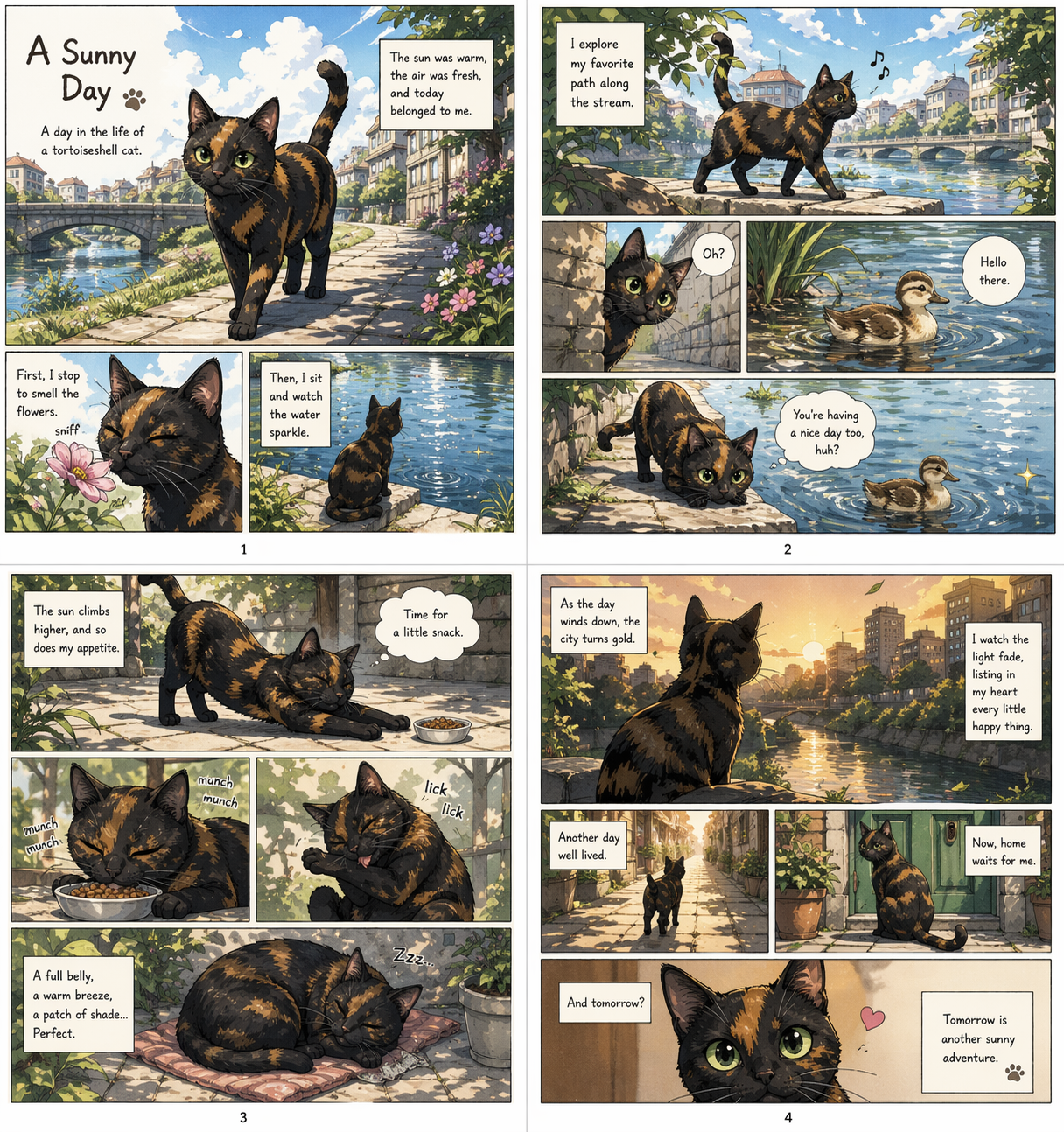

Thinking mode isn’t just a speed toggle. It’s a qualitatively different process. The system searches the web for reference data mid-generation, applies layout reasoning, and can output up to 8 coherent images simultaneously — maintaining character and object continuity across the entire series. That’s not diffusion doing its thing. That’s a reasoning engine coordinating a visual production pipeline.

Text rendering, historically the Achilles’ heel of image AI, is now genuinely usable. Where DALL-E 3 would hallucinate “enchuita” on a restaurant menu, Images 2.0 produces typography accurate enough for commercial deployment — and it handles Japanese, Korean, Chinese, Hindi, and Bengali as naturally as Latin scripts. The model supports output from 1024×1024 all the way to 2K resolution, with aspect ratios from ultra-wide 3:1 to portrait 1:3.
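A minimal sketch for turning an aspect ratio in that range into a `size` string. `pick_size` is a hypothetical helper, not part of the OpenAI SDK, and it rests on assumptions this article does not confirm: that custom `WIDTHxHEIGHT` values are accepted, that “2K” means a 2048 px long edge, and that both sides should be multiples of 16.

```python
from fractions import Fraction

# Assumptions (not confirmed by OpenAI docs): "2K" means a 2048 px long edge,
# and both dimensions should be multiples of 16.
MAX_LONG_EDGE = 2048
MULTIPLE = 16
MIN_RATIO, MAX_RATIO = Fraction(1, 3), Fraction(3, 1)  # portrait 1:3 to ultra-wide 3:1


def pick_size(width_ratio: int, height_ratio: int, long_edge: int = 1024) -> str:
    """Hypothetical helper: return a 'WxH' size string for an aspect ratio."""
    ratio = Fraction(width_ratio, height_ratio)
    if not MIN_RATIO <= ratio <= MAX_RATIO:
        raise ValueError(f"aspect ratio {width_ratio}:{height_ratio} is outside 1:3 to 3:1")
    if not 0 < long_edge <= MAX_LONG_EDGE:
        raise ValueError(f"long edge must be between 1 and {MAX_LONG_EDGE} px")

    def snap(x: Fraction) -> int:
        # Round to the nearest multiple of 16 (ties to even), never below 16.
        return max(MULTIPLE, round(x / MULTIPLE) * MULTIPLE)

    if ratio >= 1:  # landscape or square: width is the long edge
        width, height = Fraction(long_edge), long_edge / ratio
    else:  # portrait: height is the long edge
        width, height = long_edge * ratio, Fraction(long_edge)
    return f"{snap(width)}x{snap(height)}"


print(pick_size(1, 1))        # 1024x1024
print(pick_size(3, 1, 2048))  # 2048x688
print(pick_size(1, 3, 2048))  # 688x2048
```

Validating the ratio locally is a cheap way to catch an out-of-range request before paying for it; the API reference remains the authority on which sizes gpt-image-2 actually accepts.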

Why You Should Care

For our community — digital artists, 3D creators, game devs, comic artists — the implications hit in three specific spots:

- Sequential storytelling just leveled up. Thinking mode was explicitly designed for manga, comic strips, and storyboards. Eight coherent panels, same character, same art style, from one prompt. This blog covered how AI comics cracked character consistency back in March; Images 2.0 bakes that capability directly into a mainstream tool, no ComfyUI workflows required.

- UI and concept design prototyping. The model renders UI elements, icons, and dense compositions without breaking down. OpenAI is already positioning it inside Codex for UI mockup generation within development workflows. Architects and game designers can now prototype visual concepts at a speed that wasn’t possible before.

- Multi-format campaign generation. Up to eight images from a single prompt (wide banners, social posts, mobile screens, posters), all visually consistent. For studios producing asset packs or creative directors managing cross-platform content, this is a genuine time multiplier.
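For sequential work, one approach (assumed here, not documented by OpenAI) is to restate a fixed character description once and then list each panel beat explicitly, so the model has a clear anchor for continuity. The sketch below assembles such a prompt; `CharacterSheet` and `build_strip_prompt` are hypothetical names, and nothing here is an OpenAI API.

```python
from dataclasses import dataclass


@dataclass
class CharacterSheet:
    """Hypothetical character description reused across every panel."""
    name: str
    look: str
    style: str


def build_strip_prompt(character: CharacterSheet, beats: list[str]) -> str:
    """Compose one prompt for an N-panel strip with a pinned character description."""
    if not 1 <= len(beats) <= 8:  # the article cites up to 8 coherent images per prompt
        raise ValueError("expected between 1 and 8 panel beats")
    header = (
        f"A {len(beats)}-panel comic strip in {character.style}. "
        f"Keep {character.name} identical in every panel: {character.look}."
    )
    panels = [f"Panel {i}: {character.name} {beat}." for i, beat in enumerate(beats, start=1)]
    return "\n".join([header, *panels])


robot = CharacterSheet(
    name="the robot mechanic",
    look="dented orange chassis, one cyan eye, welding goggles",
    style="black-and-white manga style with screentone shading",
)
print(build_strip_prompt(robot, [
    "wheels a broken hover-bike into a neon-lit alley",
    "opens the engine panel with a wrench",
    "welds a cracked thruster",
    "test-rides the repaired bike past the neon signs",
]))
```

The resulting string can be passed as `prompt` to an `images.generate` call; keeping the character sheet in one object makes it easy to reuse the same description across several strips.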

The limitation is real, though: Thinking mode adds 15–30 seconds of latency per generation. For a live creative session, that pause is noticeable. For batch pipeline production, it’s irrelevant.
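In a batch pipeline, that per-generation wait can be overlapped by running several requests at once. A minimal sketch with a thread pool follows; `generate` stands in for whatever function wraps your `images.generate` call, `fake_generate` is a hypothetical stand-in that sleeps instead of calling the API, and the worker count is an assumption to tune against your account's rate limits.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, TypeVar

T = TypeVar("T")


def run_batch(prompts: list[str], generate: Callable[[str], T], max_workers: int = 4) -> list[T]:
    """Run generate(prompt) for every prompt concurrently; results keep input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map yields results in the same order as the input prompts
        return list(pool.map(generate, prompts))


def fake_generate(prompt: str) -> str:
    """Hypothetical stand-in for a slow image request: sleeps instead of calling the API."""
    time.sleep(0.5)
    return f"image for: {prompt}"


start = time.perf_counter()
results = run_batch([f"storyboard shot {i}" for i in range(8)], fake_generate, max_workers=4)
elapsed = time.perf_counter() - start
print(len(results), f"results in {elapsed:.1f}s")
```

With eight simulated half-second calls and four workers, wall-clock time should land near one second rather than the four seconds a sequential loop would take; the same ratio applies when each real call takes 15–30 seconds.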

Try It

In ChatGPT: Available to all users (free and paid) starting April 22, 2026. Instant mode is accessible on all tiers. Thinking mode (with multi-image batching, web search integration, and layout reasoning) requires a ChatGPT Plus ($20/mo), Pro ($200/mo), Business, or Enterprise subscription.

Via API: The gpt-image-2 model is live in the OpenAI API. Per-image pricing at 1024×1024 depends on the quality setting: Low at $0.006, Medium at $0.053, and High at $0.211. Token-based billing also applies for complex edits: $8 per million input tokens and $32 per million output tokens.

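```python
import base64

from openai import OpenAI

client = OpenAI()  # reads OPENAI_API_KEY from the environment

result = client.images.generate(
    model="gpt-image-2",
    prompt="A 4-panel manga strip: a robot mechanic repairs a hover-bike in a neon-lit alley, same character in each panel",
    size="1024x1024",
    quality="high",
    n=4,  # up to 8 in Thinking mode
)

# Save each returned image (assumes base64 output, as gpt-image-1 returns)
for i, image in enumerate(result.data, start=1):
    with open(f"panel_{i}.png", "wb") as f:
        f.write(base64.b64decode(image.b64_json))
```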
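To budget a run like the `n=4`, `quality="high"` call above, the quoted prices can be turned into a quick estimate. This sketch hard-codes the 1024×1024 figures and token rates listed in this article (confirm them against OpenAI's pricing page before relying on them) and keeps per-image and token billing as separate estimates; `image_cost` and `token_cost` are hypothetical helpers, and the token counts in the example are made up for illustration.

```python
# Prices quoted in this article for gpt-image-2 at 1024x1024 (USD per image).
PER_IMAGE_1024 = {"low": 0.006, "medium": 0.053, "high": 0.211}
# Token rates quoted for complex edits (USD per million tokens).
INPUT_PER_M = 8.00
OUTPUT_PER_M = 32.00


def image_cost(quality: str, n: int) -> float:
    """Estimated cost of n images at 1024x1024 for the given quality tier."""
    return round(PER_IMAGE_1024[quality] * n, 4)


def token_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimated cost of a token-billed request, such as a complex edit."""
    return round((input_tokens * INPUT_PER_M + output_tokens * OUTPUT_PER_M) / 1_000_000, 4)


print(image_cost("high", 4))      # 0.844
print(image_cost("low", 8))       # 0.048
print(token_cost(5_000, 10_000))  # 0.36 (hypothetical token counts)
```

At these rates, four high-quality panels cost roughly 140 times as much as four low-quality drafts, which argues for iterating at low quality and switching to high only for the final pass.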

Also available via fal.ai for quick no-code testing and chatgpt.com/images for the full conversational workflow.

IK3D Lab Take

The industry keeps chasing “better diffusion” — sharper outputs, faster inference, more LoRA styles. OpenAI took a different turn: they applied the architecture of reasoning models to the image generation problem. The result isn’t just a prettier output. It’s a different process.

For anyone doing sequential work — comic artists, storyboarders, game concept artists — this matters more than raw image quality. Eight consistent frames from one prompt is workflow-changing. The text rendering breakthrough alone unlocks use cases (infographics, UI mockups, international localization) that were previously duct-taped together with post-processing tricks.

The 15–30s thinking latency is the honest trade-off. This is not your fast iteration tool. It’s your “give me the full scene series” tool. And in that role, it’s currently unmatched in a mainstream accessible package. Watch for FLUX Kontext and other open-source alternatives to respond — but right now, for comic and sequential art workflows especially, gpt-image-2 in Thinking mode is the one to test.